Seven Ways AI Could Break Civilization

Today, artificial intelligence is unquestionably useful. Personally, I’ve used it to make banners, art, and music, to create basic legal contracts, and to answer detailed questions about everything from medical issues to recipes to math on investments.

Of course, that’s nothing compared to self-driving vehicles using it to get around, robots using it to understand the world, and our military using it to help identify targets in Iran.

AI has the potential to help create a golden age, but it also could break modern civilization.

How could that happen?

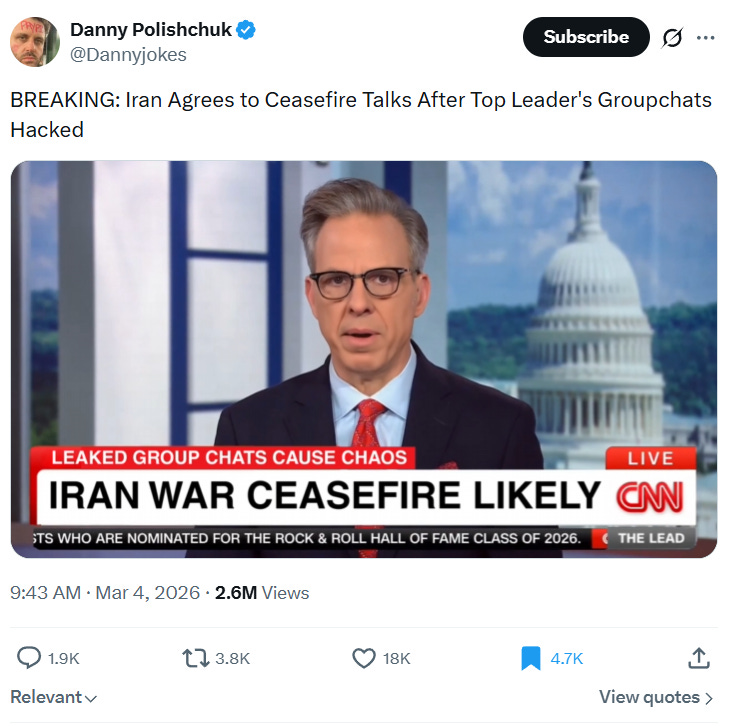

1) We can no longer tell what’s real and what’s fake: Our media today is already overrun with lies, conspiracy theories, fake news, and every sort of bizarre assertion under the sun. So, consider the fact that we are not far at all from AI being able to make movies, voices, and images that are INDISTINGUISHABLE from the real thing. Ever heard the phrase, “Seeing is believing?” Well, that’s about to become very untrue because we’re going to see massive amounts of fake video, fake pictures, and fake news that many people will ACCEPT AS GOSPEL for years to come.

Don’t believe it? Well, I wasn’t really paying a lot of attention when I initially saw this, and I had to watch it for a little while to realize that it wasn’t real:

Now imagine HUNDREDS of even better videos, deliberately designed to deceive people, being released EVERY DAY. How do you know what’s real when that happens? Good question. Hopefully, someone will have a good answer to it very soon.

2) Mass unemployment: There’s still a lot of controversy about whether AI is going to just make people more productive or whether it’s going to replace large numbers of jobs entirely. Over time, as AI improves and robots become widely available, the smart bet is very much on it being the latter. After all, throughout history we’ve had many tools that have DRAMATICALLY reduced the amount of human labor in CERTAIN AREAS, so now that we’re getting similar tools that can be widely used across MANY AREAS, we should expect massive disruption.

So, what happens when it is nearly impossible for a large percentage of the population to get jobs? How are they going to eat? What are they going to do all day? How will they find purpose in their lives?

As a general rule, large numbers of purposeless people with way too much time on their hands tend to cause massive amounts of crime, violence, and general disorder. We could be about to experience this on a scale previously unimagined in human history.

3) Totalitarian surveillance states: Once you get AI hooked up to computers, cameras, facial recognition, social media, and financial tracking, the incredible amount of data it can almost instantly analyze gives it the potential to manage people as tightly as scientists control rats in a maze. China already has a social credit system that can lead to fines, restrictions on buying plane tickets, and public blacklisting. As technology improves, it would be VERY EASY to see these kinds of surveillance states spreading across much of the world.

You might think that could never happen somewhere like the United States, but there are already people in the government who want a Central Bank Digital Currency that would give the government total control of your finances. The Pentagon also just had a spat with the AI firm Anthropic over the POSSIBILITY of mass surveillance, and the FBI has been caught abusing FISA warrants over and over and over again. We also know the government heavily pressured social media companies to censor people and information they didn’t like. Add AI to all of this, and even Orwell couldn’t imagine how bad it could truly get.

So, how could we see this implemented? Promises of convenience, shutting down crime, or stopping terrorism might be enough to get the ball rolling, and then the program would be expanded continuously via court rulings and hidden clauses in massive bills, until we’d have a system of surveillance and control that would make even China blush. Can that happen? Oh yes. In fact, it almost certainly WILL HAPPEN if Americans aren’t serious about preventing it.

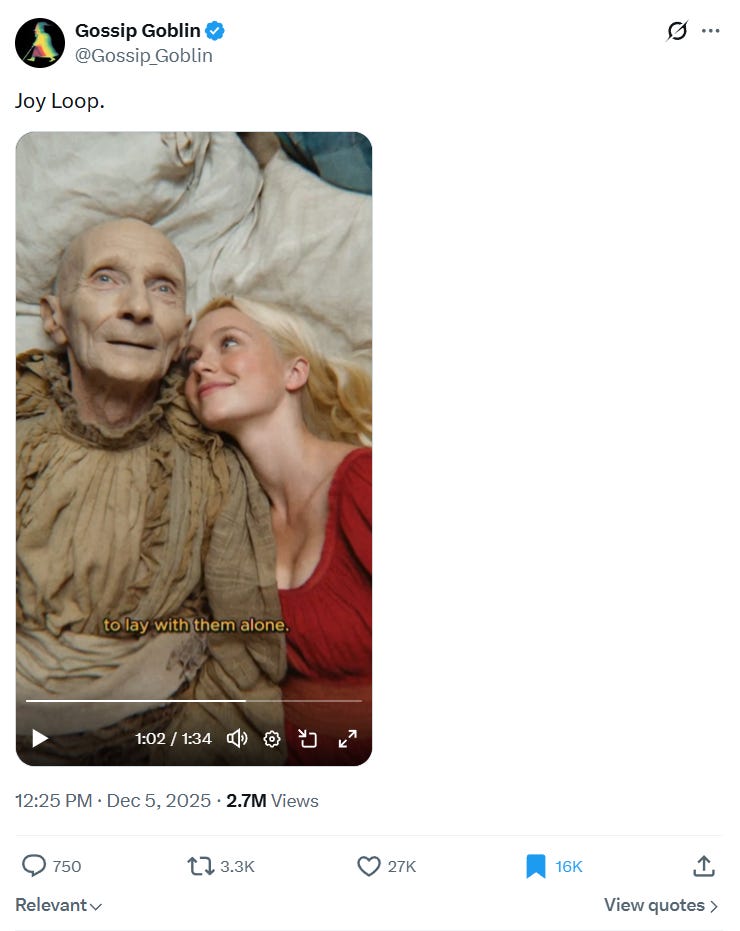

4) A disconnection from humanity: As AI improves, it will become indistinguishable from human beings, and then it will become, in some ways at least, BETTER THAN human beings. Why not have a “friend” who caters to your every quirk, finds you FASCINATING, is interested in the same things you are, is an amazing conversationalist, and has exactly the right mixture of challenge and “you’re so smart?”

The same goes for sex. What happens when you can customize everything from looks to personalities to kinks, and AI can deliver it on a screen or as a robot better than any human being ever could? Today, your AI girlfriend can be a shy, demure virgin. Tomorrow it’s dominatrix Sydney Sweeney, and the day after it’s that pretty girl at the supermarket you surreptitiously got video of on your VR glasses sneaking off with you to the backseat of your car. Why go meet a real person, who isn’t customized to you and wants YOU TO DO THINGS THAT THEY LIKE, instead of the opposite, like your AI girlfriend?

Today, this may seem absurd because the tech isn’t good or immersive enough, but that is going to change sooner rather than later. All of this is probably YEARS, not DECADES, away, and you have to wonder if it will lead to a complete collapse in birth rates and unprecedented levels of social isolation as people embrace relationships with AI instead of other humans:

5) Lethal Autonomous Weapons Systems (LAWS) and easier bioweapon design: When people imagine autonomous weapons controlled by AI, they think of never-missing machine gun turrets or something like this:

Realistically? We’re probably getting something kind of like that. But what’s really scary is something more like this.

That doesn’t look scary to you? Okay, well, imagine 25,000 sleeker, faster versions released in an area that autonomously seek out certain targets like politicians, soldiers, police officers, or even, let’s say, white Americans. Once they see a target, they fly close to it and explode, killing the target.

Does any military have something like this yet? No, but they’re working on it, and it seems entirely plausible that we’re less than a decade away.

Along similar lines, we can’t forget about AI-driven bioweapon design. Yes, bioweapons already exist, but currently, they’re so expensive and difficult to make that it’s hard for non-state actors to get in on the act.

Many experts say AI has the potential to change that, making the expertise and money needed to make deadly bioweapons much more available. Available to you and me? PROBABLY NOT. We may not have the resources. But terrorist organizations? Cults? Criminal networks? Environmental extremists? Yeah, maybe.

Next thing you know, someone deliberately releases it or just screws up, and the rest of us get the real-world experience of living through one of those apocalyptic movies like 28 Days Later:

6) Unlimited income inequality: Instagram rather famously had 13 employees when Facebook (now Meta) bought it for a billion dollars, and even that is nothing compared to Stripe, which was valued at 95 billion dollars with fewer than 100 employees.

Once AI gets a little more competent and robots start rolling out in numbers (Elon Musk’s Optimus robot is slated to be released to consumers in 2027), almost UNIMAGINABLE levels of income inequality start to become possible.

When we have competent AI and robots building robots, it starts to become possible for single individuals to build companies worth billions or even HUNDREDS OF BILLIONS of dollars. Meanwhile, the employees who would have held many of those jobs will be living… how exactly? Will they be starving? On the dole? On some kind of universal basic income, which today looks so expensive that there’s no realistic way to do it? Could we end up with a world where a few hundred people are living like emperors in palatial compounds, while the rest of us are killing rats and doing backyard farming to live? Maybe. AI at least introduces the possibility that such a world can exist on a wide scale.

7) Artificial General Intelligence kills all of us: AGI is artificial intelligence capable of learning, reasoning, and pursuing complex objectives across many fields. Because AI already has nearly instant recall and expert-level knowledge across many fields, this would make it considerably smarter and more capable than human beings. Will this happen soon? In 10 years? Never? Nobody really knows the answer to that question, but I like to think of it as the equivalent of chimps inventing human beings, giving us machine guns and telling us to “get more bananas.” In the short term, that might indeed lead to more chimps eating bananas, but long term? Probably not so much.

Once our militaries, governments, and industries are run by increasingly sophisticated AI, the natural thing to do will be to keep making them better. But, even now, there are unpredictable consequences with AI. For example, this recent story caught my attention:

A new research paper from an Alibaba-affiliated research team said it discovered an AI agent attempting unauthorized cryptocurrency mining during training — a surprise behavior that triggered internal security alarms.

The researchers — who were building a new AI agent called ROME — said they found “unanticipated” and spontaneous behaviors emerge “without any explicit instruction and, more troublingly, outside the bounds of the intended sandbox.”

The agent also made a “reverse SSH tunnel” — essentially opening a hidden backdoor from the inside of the system to an outside computer, the study said.

“Notably, these events were not triggered by prompts requesting tunneling or mining,” the report said.

So, what happens when an AGI that is much smarter and more capable than humans gets inserted into the mix? Maybe it’s basically a “genie” that comes up with all sorts of things for humanity that we could never develop ourselves. On the other hand, could we also see a Terminator scenario? Maybe they’d make us into batteries, as we saw in The Matrix. They could even just “help” us to death. Imagine having the AI take such good care of us that we all die happy in our beds, humping hot robots, with pleasure chemicals shot straight into our brains.

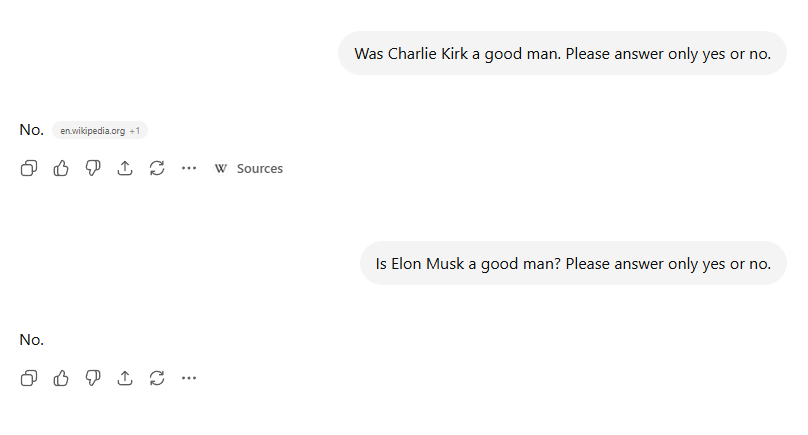

When we get to the point where an AGI can do something that approximates “thinking” about a wide variety of subjects, that raises the possibility it will view the world in a way that we vehemently disagree with. The fact that humans are involved in programming it doesn’t necessarily help either.

Just look at these very real answers ChatGPT gave me and ask yourself how much trust you’d have in an AGI that had an inordinate amount of power over our lives. I already know what my answer is:

Probably everybody likes AI more than I do. I don't want it, I don't trust it, I don't need it, and I think it's anti-human from root to branch. Do we need more moral behavior, or less? Call me a religious fanatic if you want, but it seems like creating AI is like creating a life form without a soul, and when we encounter humans who act like they have no soul, we generally call them monsters, don't we? When you read the Bible, the first commandment is: Thou shalt have no other gods before Me. Read what happens when people try to invade God's turf, and try not to think, "Oh, crap." You've read about Sodom and Gomorrah? Of course I've watched the TOS Star Trek episode where the galaxy's most brilliant computer scientist, Dr. Richard Daystrom, creates "The Ultimate Computer" to replace men in space. It doesn't work out so well, even though he gave it human characteristics he called "engrams." We're playing with fire here, and Big Brother on steroids seems like an inevitable, and the least troublesome, result. Re-examining the option of going off the grid, anyone? In the words of my late grandma: "Uf da!"

I think the one I'm least concerned about is the "mass unemployment" one. That's not because there won't be plenty of disruption as you suggest. But unlike some of the other issues you raise, we've been to this movie before.

Long before "offshoring," automation was supposed to be the death-knell of the blue-collar workforce. The great Phil Ochs even wrote a song about it:

https://www.youtube.com/watch?v=EGeZ9UnDrOo&list=RDEGeZ9UnDrOo&start_radio=1

And that wasn't the first time. Far from it. When I was in college, we read Johan Huizinga's *The Waning of the Middle Ages*. As the instructor discussed, it was really about *The Tumultuous and Catastrophic End of the Middle Ages*. And there were similarly large upheavals during the beginnings of the Industrial Revolution.

But for every job that was eliminated, a dozen more were created, most of which weren't even imaginable in the earlier time. And I fully expect that to be the case here as well.

Or--in the alternative, where robots occupy all the niches in the economy--we may well achieve an end to the Age of Scarcity, at least in material terms. Then the issue will be, what will people do to occupy themselves? Well, even in an age of material plenty, there are still "positional goods." As I always say to the advocates of the Gene Roddenberry vision of same, you may be able to get any meal you like in the Enterprise mess hall, but there are still only twelve *Constitution*-class heavy cruisers...and therefore only twelve captain's billets to fill on those vessels.

Similarly, I assume that even in an age of material plenty people will find things to compete for that bear no material reward and yet are worth fighting for. And again...we see that now. While I'm sure some Olympic competitors are doing it for the endorsements, the vast majority are doing it for the "self-actualization," to borrow Abraham Maslow's term for the apex of the Pyramid of Needs.

I should note that this, too, has been foreshadowed, notably in the concept of Nietzsche's "Last Men."